The single-layer perceptron was one of the first neural network models proposed. The local memory of the neuron consists of a vector of weights. A single-layer perceptron computes the sum of the elements of the input vector, each multiplied by the corresponding element of the weight vector. This weighted sum is the input to an activation function, which produces the output.
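Here is a minimal Python sketch of that computation. It assumes a simple step (threshold) activation that outputs 1 when the weighted sum reaches the threshold and 0 otherwise. The function names `weighted_sum`, `step_activation` and `perceptron_output` are hypothetical and are not taken from the original source code.

```python
def weighted_sum(inputs, weights):
    """Sum of each input multiplied by the corresponding weight."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    return sum(x * w for x, w in zip(inputs, weights))


def step_activation(total, threshold):
    """Assumed step activation: 1 if the sum reaches the threshold, else 0."""
    return 1 if total >= threshold else 0


def perceptron_output(inputs, weights, threshold):
    """Weighted sum fed into the activation function gives the output."""
    return step_activation(weighted_sum(inputs, weights), threshold)


print(weighted_sum([1, 0, 1], [0.5, -1.0, 2.0]))                     # 2.5
print(perceptron_output([1, 0, 1], [0.5, -1.0, 2.0], threshold=2.0))  # 1
```

Other activation functions (for example a sigmoid) could replace `step_activation`, but a hard threshold matches the comparison against a threshold used in the algorithm below.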

Algorithm:

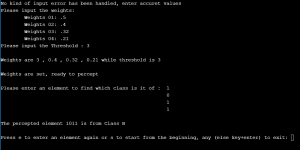

1. Initialize all weights and the threshold value.

2. Compute the weighted sum for one input.

3. Compare the weighted sum with the threshold and set the output accordingly.

4. If the input is of class A:
   - If the desired output does not match the actual output, decrease those weights whose corresponding input is 1.
   - Else, take the next input and go to Step 2.

5. Else (the input is of class B):
   - If the desired output does not match the actual output, increase those weights whose corresponding input is 1.
   - Else, take the next input and go to Step 2.
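The training steps above can be sketched in Python as follows. This is a minimal sketch under assumptions not stated in the original: inputs are 0/1 vectors, weights start at zero, the threshold is fixed, each adjustment changes a weight by a hypothetical `step` of 1.0, and the whole training set is swept repeatedly until a full pass produces no mismatches (giving up after a hypothetical `max_epochs`). Class A is taken to mean "weighted sum below the threshold", which matches the classification rule used later in this post.

```python
def weighted_sum(inputs, weights):
    """Sum of each input multiplied by its corresponding weight."""
    return sum(x * w for x, w in zip(inputs, weights))


def train(samples, n_inputs, threshold=1.0, step=1.0, max_epochs=100):
    """Learn weights from (inputs, label) pairs, where label is "A" or "B".

    Assumed convention: class A should give a weighted sum below the
    threshold, class B a weighted sum at or above it.
    """
    weights = [0.0] * n_inputs  # Step 1: initialize the weights (assumed zero)
    for _ in range(max_epochs):
        mistakes = 0
        for inputs, label in samples:
            total = weighted_sum(inputs, weights)       # Step 2
            output = "A" if total < threshold else "B"  # Step 3
            if output == label:
                continue  # outputs match: take the next input
            mistakes += 1
            # Class A misclassified: sum too large, so decrease weights.
            # Class B misclassified: sum too small, so increase weights.
            delta = -step if label == "A" else step
            for i, x in enumerate(inputs):
                if x == 1:  # only weights whose input is 1 are changed
                    weights[i] += delta
        if mistakes == 0:
            return weights  # a full pass with no mismatches
    raise RuntimeError("no convergence; data may not be linearly separable")


or_samples = [([0, 0], "A"), ([0, 1], "B"), ([1, 0], "B"), ([1, 1], "B")]
print(train(or_samples, n_inputs=2))  # [1.0, 1.0]
```

With these assumptions, the OR-like data set (class A only for `[0, 0]`) settles on weights `[1.0, 1.0]` after two passes. An XOR-like data set never converges, because no single threshold unit can separate it, so the sketch raises an error instead of looping forever.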

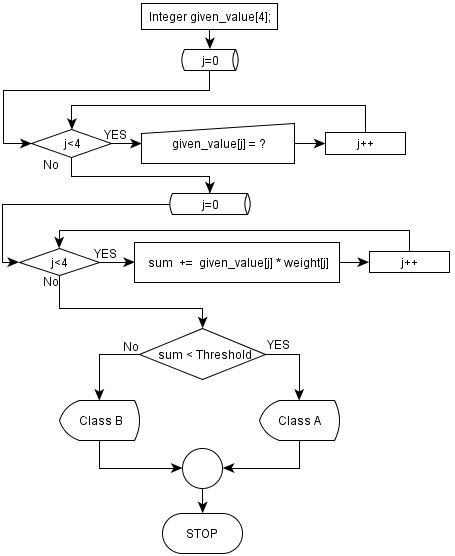

- Once all weights have been set, take an input from the user.

- Compute the weighted sum for this input.

- Compare the weighted sum with the threshold:
  - If the weighted sum is less than the threshold, the input is from Class A.
  - Else, the input is from Class B.
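The classification phase above can be sketched in Python as follows. In this minimal sketch the weights and threshold are hypothetical values chosen for illustration, and the hypothetical helper `read_and_classify` takes a `read` callable (defaulting to Python's built-in `input`) so the user prompt can be replaced with a fixed string when testing.

```python
def classify(inputs, weights, threshold):
    """Class A if the weighted sum is below the threshold, otherwise class B."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return "A" if total < threshold else "B"


def read_and_classify(weights, threshold, read=input):
    """Read space-separated 0/1 values (e.g. "1 0") and classify them."""
    line = read(f"Enter {len(weights)} inputs separated by spaces: ")
    inputs = [int(token) for token in line.split()]
    if len(inputs) != len(weights):
        raise ValueError(f"expected {len(weights)} values, got {len(inputs)}")
    return classify(inputs, weights, threshold)


# Hypothetical weights and threshold, chosen only for illustration.
weights, threshold = [1.0, 1.0], 1.0
print(classify([0, 0], weights, threshold))  # A
print(classify([1, 0], weights, threshold))  # B
print(read_and_classify(weights, threshold, read=lambda prompt: "0 1"))  # B
```

In an interactive program you would call `read_and_classify(weights, threshold)` without the `read` argument, so the values are typed in by the user.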

Flowchart of the Program:

Part 01:


Part 02:

Source Code:


Link to the source code: https://www.onlinegdb.com/

Output:

This was an assignment for my Theory of Computation course. I don’t think this blog post has simplified the matter much, but if it helps you anyway, I will be glad to know.